Generating new Typefaces using AI

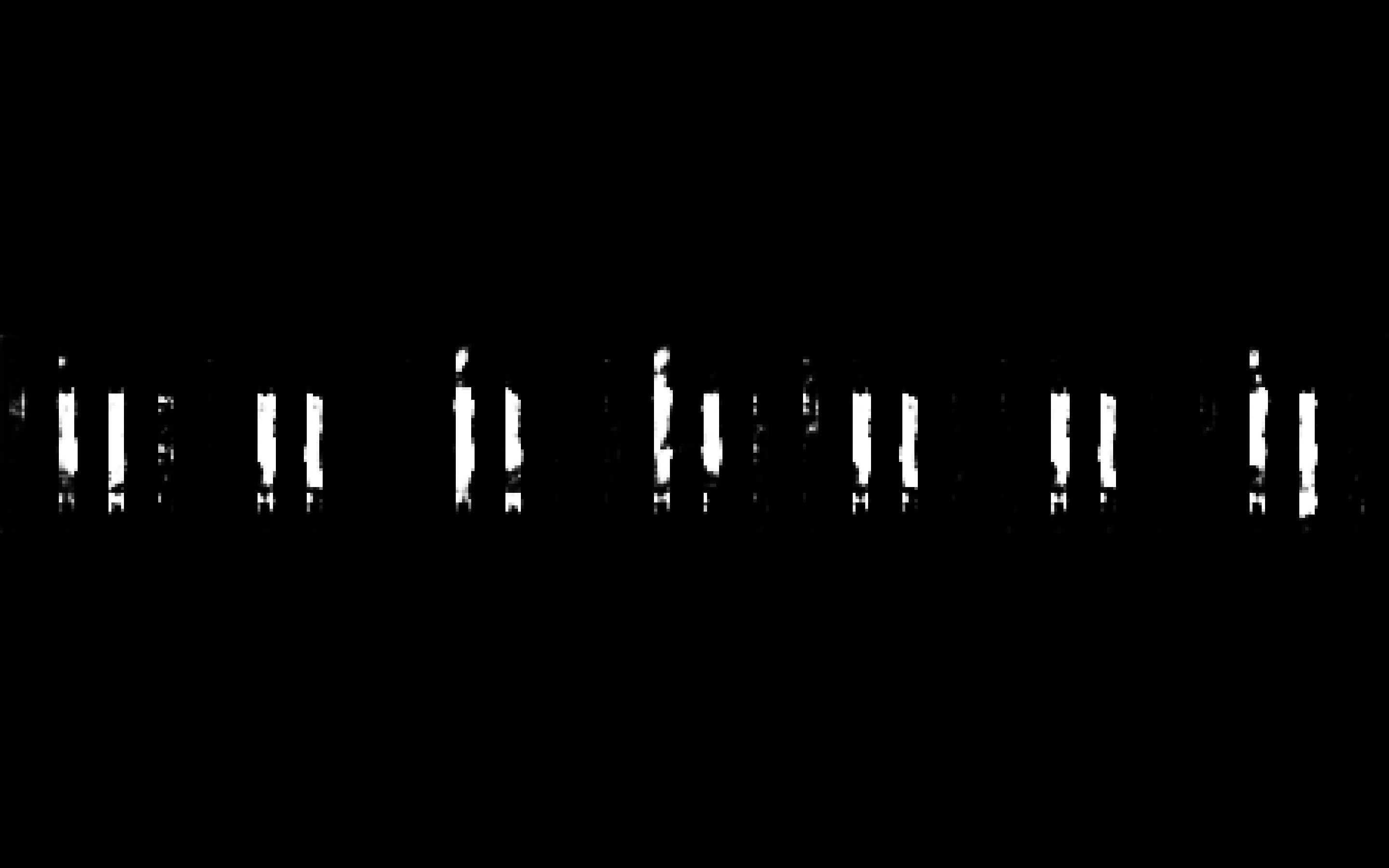

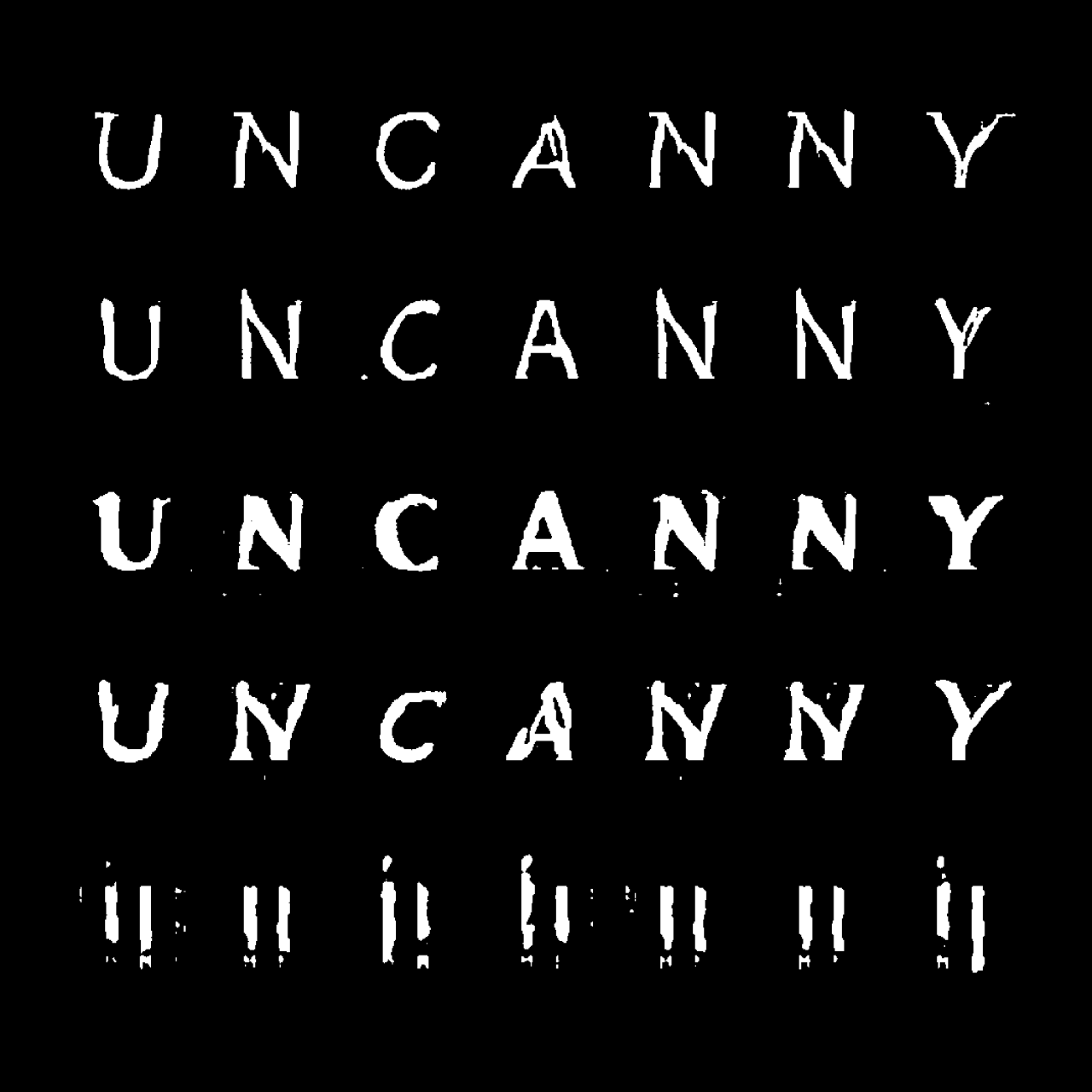

Aifont is a system that generates new typefaces. The typefaces shown here were generated with a custom DCGAN (deep convolutional generative adversarial network) trained on a large number of images containing text. Each output frame shows a new typeface, and playing the frames one after another as a sequence produces an oscillation between different styles and kinds of typefaces.
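The text does not say how the frame sequence is produced. A common way to get this kind of smooth oscillation from a GAN is to walk through the generator's latent space, and the sketch below illustrates that idea under stated assumptions. The latent size of 100, the function names, and the spherical interpolation are all illustrative choices, not details confirmed for Aifont.

```python
import math
import random

LATENT_DIM = 100  # assumption: a typical DCGAN latent size, not confirmed by the source


def random_latent(rng, dim=LATENT_DIM):
    """Draw one latent vector from a standard normal distribution."""
    return [rng.gauss(0.0, 1.0) for _ in range(dim)]


def slerp(a, b, t):
    """Spherically interpolate between latent vectors a and b for t in [0, 1]."""
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    cos_omega = sum(x * y for x, y in zip(a, b)) / (norm_a * norm_b)
    cos_omega = max(-1.0, min(1.0, cos_omega))  # guard against rounding error
    omega = math.acos(cos_omega)
    sin_omega = math.sin(omega)
    if sin_omega < 1e-8:
        # Vectors are (anti)parallel: fall back to plain linear interpolation.
        return [(1 - t) * x + t * y for x, y in zip(a, b)]
    weight_a = math.sin((1 - t) * omega) / sin_omega
    weight_b = math.sin(t * omega) / sin_omega
    return [weight_a * x + weight_b * y for x, y in zip(a, b)]


def latent_walk(num_keys=4, steps_per_segment=30, seed=0):
    """Return a looping list of latent vectors, one per output frame."""
    rng = random.Random(seed)
    keys = [random_latent(rng) for _ in range(num_keys)]
    frames = []
    # Pair each key with the next one, and the last key with the first,
    # so the sequence loops back to where it started.
    for start, end in zip(keys, keys[1:] + keys[:1]):
        for i in range(steps_per_segment):
            frames.append(slerp(start, end, i / steps_per_segment))
    return frames


frames = latent_walk()
```

In a real pipeline, each vector in `frames` would be passed to the trained generator (a hypothetical call such as `generator(z)`) to render one typeface image per frame. Nearby latent vectors tend to yield similar images, which is why consecutive frames would appear to morph between styles.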

AI-driven typeface generator

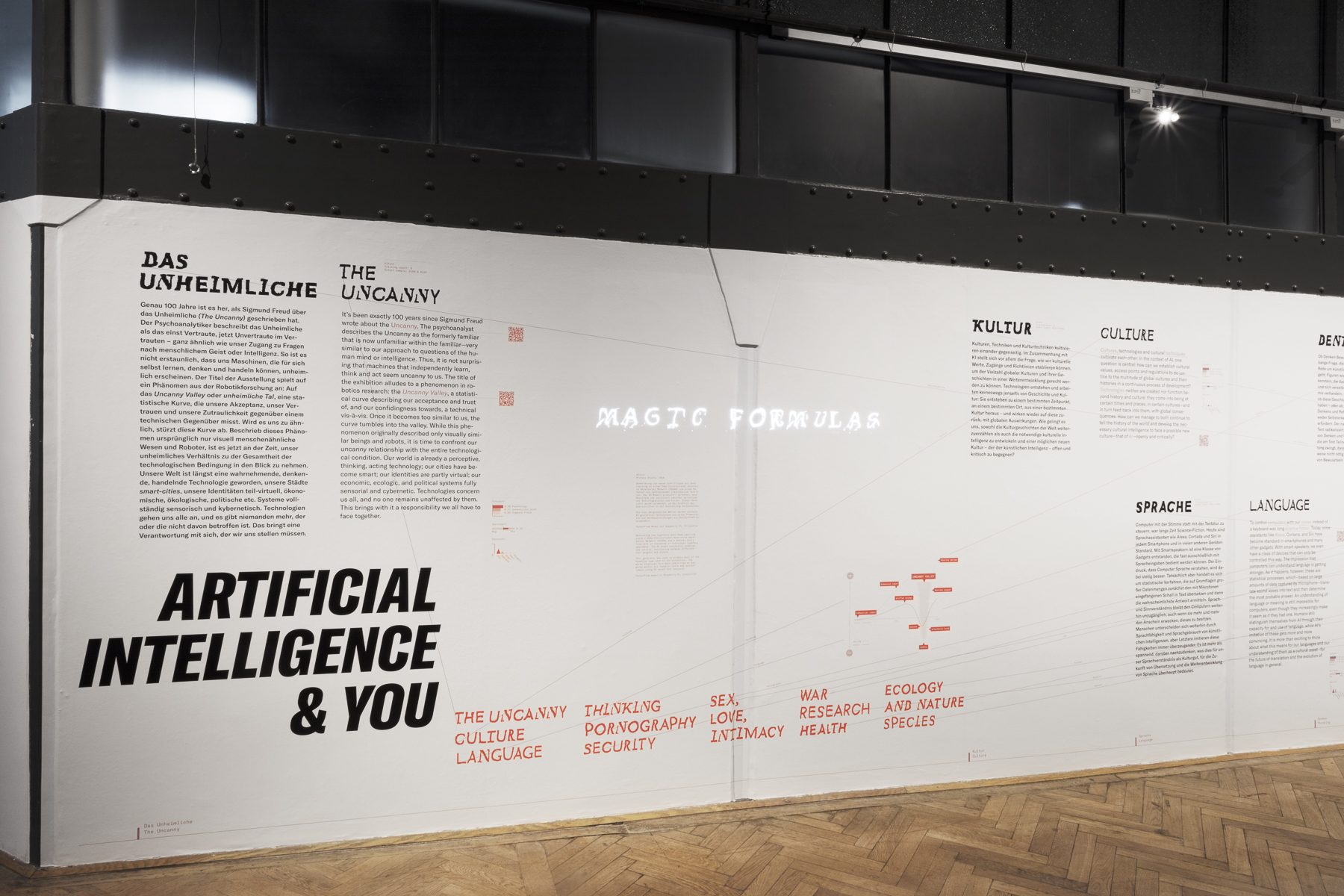

Together with the AImoji, the Aifont is part of the visual identity of UNCANNY VALUES, an exhibition at the MAK in the context of the VIENNA BIENNALE FOR CHANGE 2019. It is a generative artwork and a process rather than a single still frame.

Variants of the typeface were used extensively throughout the exhibition’s display and were presented as a separate installation.

From the VIENNA BIENNALE 2019 brochure:

The Viennese design duo Process Studio has developed the exhibition’s visual, multimedia imparting of knowledge as well as the communication of UNCANNY VALUES in public spaces. As computer scientists, they worked their way into the technical foundations of neural networks and, with the help of a so-called Generative Adversarial Network (GAN), developed especially for the VIENNA BIENNALE 2019 a unique form of communication that truly never existed before. GANs are groups of algorithms for unsupervised learning. They are currently used, for example, to produce photorealistic pictures of people who never existed or deceptively real sounding, machine-generated texts that can be used for fake news. The implications and risks of such a technology are correspondingly wide-ranging.

Process Studio have confronted their GAN with a very special reality and language: all of the emoji currently in existence. As an international, universal visual language that is first and foremost about expressing emotions, emoji are uncannily suited to such a process. We can watch the GAN learning, trying to interpret what the usually yellow and round entities that make up a world actually are. Furthermore, we can watch it as it reacts to it, what supposed emotions it derives from it and creates anew. This results in thousands of AImoji, which emerge slowly and uncannily from behind white noise and begin to communicate with us. We do not yet know what they want to say to us but the conversation has begun. Some may seem monstrous to us, and it is unclear what emotions they are trying to express. What begins to become clear, however, is that when confronted with them we have to rethink and adjust our habits of reading the world. Using the same method an endless stream of new typefaces which oscillates between different font weights and styles was created.

#uncannyvalues

#aifont

Use Aifont for your project!

You can purchase Aifont for your project. Have a look here!